Meta is adding some of its teen safety features to Instagram accounts featuring children, even if they’re run by adults. While children under 13 years of age aren’t allowed to sign up on the social media app, Meta allows adults like parents and managers to run accounts for children and post videos and photos of them. The company says that these accounts are “overwhelmingly used in benign ways,” but they’re also targeted by predators who leave sexual comments and ask for sexual images in DMs.

In the coming months, the company is giving these adult-run kid accounts its strictest message settings to prevent unsavory DMs. It will also automatically turn on Hidden Words for them so that account owners can filter out unwanted comments on their posts. In addition, Meta will avoid recommending them to accounts blocked by teen users to lessen the chances of predators finding them. The company will also make it harder for suspicious users to find them through search and will hide comments from potentially suspicious adults on their posts. Meta says it will continue “to take aggressive action” on accounts breaking its rules: Earlier this year, it removed 135,000 Instagram accounts for leaving sexual comments on, and requesting sexual images from, adult-managed accounts featuring children. It also deleted an additional 500,000 Facebook and Instagram accounts linked to those original ones.

Meta introduced teen accounts on Instagram last year to automatically opt users 13 to 18 years of age into stricter privacy features. The company then launched teen accounts on Facebook and Messenger in April and is even testing AI age-detection tech to determine whether a supposed adult user has lied about their birthday so they could be moved to a teen account if needed.

Since then, Meta has rolled out more and more safety features meant for younger teens. It released Location Notice in June to let younger teens know that they’re chatting with someone from another country, since sextortion scammers typically lie about their location. (To note, authorities have observed a huge increase in “sextortion” cases involving kids being threatened online to send explicit images.) Meta also introduced a nudity protection feature, which blurs images in DMs detected as containing nudity, since sextortion scammers may send nude pictures first in an effort to convince a victim to reciprocate.

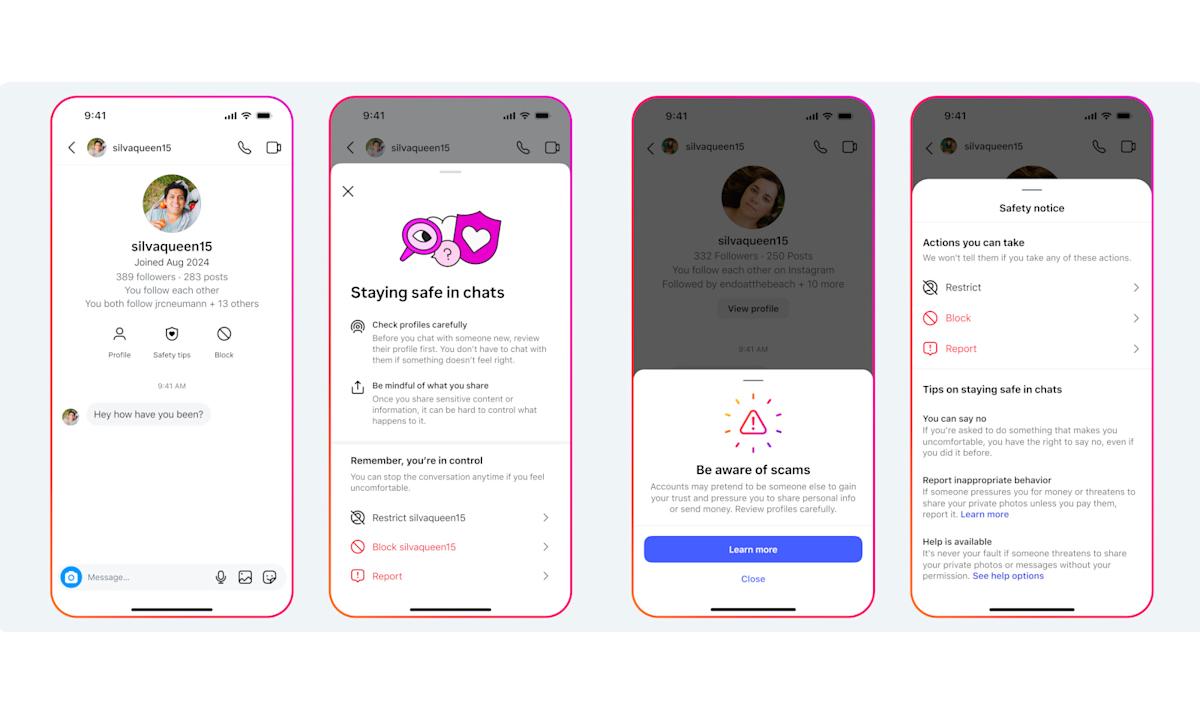

Today, Meta is also launching new ways for teens to view safety tips. When they chat with someone in DMs, they can now tap on the “Safety Tips” icon at the top of the conversation to bring up a screen where they can restrict, block or report the other user. Meta has also launched a combined block and report option in DMs, so that users can take both actions together in one tap.